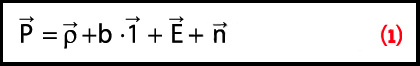

View equations 1-4

View Equations 5-13

Q: What are the differences between least-squares and Kalman filtering?

View equations 1-4

View Equations 5-13

Q: What are the differences between least-squares and Kalman filtering?

A: Most, if not all, GNSS receivers compute their positions using Kalman filtering (more common) or least-squares (less common) estimation algorithms (“estimators”). Kalman filtering also finds application in a wide variety of integrated navigation systems (e.g., GNSS integrated with inertial navigation systems). However, the role of each of these estimators is not always well understood, especially for people new to the navigation and/or estimation field.

To fully explain their differences is both simple and complex, depending on the approach taken. Here, we consider the simpler option because of limited space and because the simpler explanation arguably offers more practical insight. Of course, the simple answer is not mathematically rigorous, and those interested in such rigor are encouraged to flush out the details provided in the myriad of textbooks available on the subject.

So, with this in mind, the differences between least-squares and Kalman are surprisingly minor. This is unintuitive, given the derivation of the different algorithms; least-squares is based on minimizing the measurement residuals (i.e., the difference between the actual and predicted measurements) whereas the Kalman filter is derived based on minimizing the mean-square error of the solution. Nevertheless, this will be borne out in subsequent discussion.

Mathematical Overview

Least-squares and Kalman filtering employ the following measurement model:

Equation (1)

Equation (2)

Equation (3)

Equation (4)

where P0 is the covariance matrix reflecting the uncertainty of the a priori state information. Of course, equation (4) degenerates to equation (3) as the uncertainty in the a priori state information increases (i.e., as P0 tends to infinity). Finally, the covariance matrix of the estimated parameters, P, is given by

Equation (5)

Collectively, equations (4) and (5) represent the least-squares solution. Note that, in general, the non-linear least-squares solution is iterated until the corrections are sufficiently small. However, if the initial estimate of the state vector is sufficiently accurate, this is not necessary.

In contrast to least-squares, Kalman filtering always assumes some a priori knowledge of the state, usually obtained from some past estimates (more on this later). With this in mind, the update equation is usually written as

Equation (6)

where subscript k represents the k-th epoch, the subscript k|k –1 is the estimate at the k-th epoch based on measurements up to epoch k–1 (i.e., the a priori information), and K is the Kalman gain matrix which effectively weights the information in the observations against the a priori knowledge of the states. It is precisely this weighting that “filters” the measurements in order to yield a better solution.

The covariance matrix of the state vector after incorporating the measurements is given by

Equation (7)

where subscript k|k denotes the estimate at the k-th epoch based on measurements up to epoch k.

At face value, the two preceding equations show no obvious resemblance to those for least-squares. However, we can show that the Kalman filter equations can be re-written as

Equation (8)

Equation (9)

These newer equations are the exact parallels of equations (4) and (5) with only a change in notation for the subscripts of P. In other words, given a set of measurements, least-squares and Kalman filtering incorporate the information from the measurements in the same manner and will generate the exact same answer.

So Why Use a Kalman Filter?

As shown previously, the key difference between least-squares and Kalman filtering has nothing to do with how measurements are processed. Rather, it has to do with how the two estimators obtain their a priori information. Whereas least-squares usually obtains this information from external means (e.g., by occupying a known point), Kalman filtering predicts the a priori information using the most recent estimate of the state vector.

This predicted state vector is based on some assumed model for how the state vector changes/evolves in time; this is usually called the system model. This is expressed as

Equation (10)

where Φk,k+1 is the transition matrix that propagates the state at epoch k to epoch k+1. The transition matrix is usually based on the physics of the systems (e.g., velocity multiplied by time gives change in position). Although not discussed here, the discrete-time formula in equation (10) is often — though not always (refer to the September 2010 issue of this column by Lo Presti et alia for an example) — derived from a continuous-time state space model.

The covariance matrix of the predicted state is given by

Equation (11)

where Q is the process noise matrix that ultimately accounts for the uncertainty/errors in the assumptions used to derive the transition matrix.

To illustrate, let’s consider a one-dimensional example of trying to position a train. We define our states to be the position, p, and velocity, v, of the train, namely

Equation (12)

Since trains are large and heavy, they do not accelerate or decelerate very quickly; so, we can assume that the train is moving with approximately constant velocity. The transition matrix can thus be written as

Equation (13)

where Δt is the time between epochs k and k+1. Substituting this term into the first term on the right hand side of equation (11) propagates the uncertainty of the states forward in time. This is necessary because, if the states are not known perfectly at some time in the past, it follows that the prediction of those states will introduce larger uncertainties.

To determine Q, we need to revisit our assumption that the train is moving with constant velocity. Although this may be a reasonable approximation over short time periods, it is not always true (i.e., the train will eventually stop). This means that, in addition to the propagation of the errors from the previous epoch, we also need to add some uncertainty to describe the fact that our assumption is not perfect.

For the case at hand, the uncertainty may be small, precisely because the train’s momentum is so high. However, if we were positioning race cars instead of trains, the value of Q would have to be larger to accommodate the fact that the race car can change its speed much more quickly.

Although the objective of this article is not to give a detailed description of how to select Q (this is often covered in several chapters of textbooks and even then requires practical experience to fully appreciate), the key point is that selecting Q directly affects the performance of the filter. In the extreme, if Q is zero, the filter essentially averages information over time, that is, the filter becomes a cumulative averaging algorithm.

Conversely, if Q is selected to be infinite P–1k|k–1, in equations (8) and (9) becomes zero and the Kalman filter degenerates to the least-squares solution in equation (3).

So, the real benefit of a Kalman filter relative to least-squares is that it provides additional information to the system based on assumed knowledge of how the states (e.g., position, velocity, etc.) change with time. If the assumptions regarding the evolution of the states are correct and/or if deviations from the assumed behavior can be accommodated through some form of adaptation, the Kalman filter will typically provide temporally smoother and more accurate solutions.

Two other benefits are also realized by Kalman filters, relative to least-squares. First, if the uncertainty in the a priori estimate is not infinite, we can update the state vector using fewer measurements than there are states.

For example, for GNSS positioning, we can update a Kalman filter using pseudoranges from one or two satellites, whereas a least-squares update in this case would be impossible because of insufficient measurements (assuming no a priori information is available, which is likely for least-squares except in specific cases such as static positioning).

Second, we can estimate parameters in a Kalman filter that may not be completely observable using least-squares. A good example of this is the ability to use GNSS pseudoranges to estimate position and velocity in a Kalman filter, whereas least-squares could only estimate position using the same data. Intuitively, this is possible in a Kalman filter because successive position estimates can be used to infer velocity.

No Silver Bullets

Despite the potential benefits of Kalman filtering, we need to exercise caution before blindly applying the algorithm in all scenarios. The reason is that if the assumed behavior of the states is incorrect, the filter will still try to make the results fit the assumed behavior anyway — after all, that is what we are instructing it to do! This “self-fulfilling prophecy” behavior can mask certain effects (both good and bad) and potentially degrade performance.

A good example of this is in urban canyons where, if a receiver can only track two satellites, the Kalman filter will usually predict forward motion. However, if the receiver turns a corner, it may take several seconds (or longer) for the filter to identify this condition resulting in an “over shoot” of the trajectory at the corner.

By extension, computing a least-squares estimate at every epoch — although much noisier and less accurate — does have the benefit of providing insight into the “raw” data quality, that is, in the absence of any filtering. Often, this information can then be used to better determine the parameters for a suitable Kalman filter.

Summary

In this article we showed how least-squares and Kalman filtering estimators handle measurements the same way and that the main difference between them is that Kalman filters provide information about how the states change with time. This additional information, if correct, will indeed improve estimation accuracy.

Nevertheless, an epoch-by-epoch least-squares solution still has an important role in assessing the raw quality of the data that is not usually possible using a Kalman filter. Depending on the application, one or both approaches may prove useful, or even complementary.